How to Harden Your MCP AI Agent Pipelines Against Supply Chain Attacks

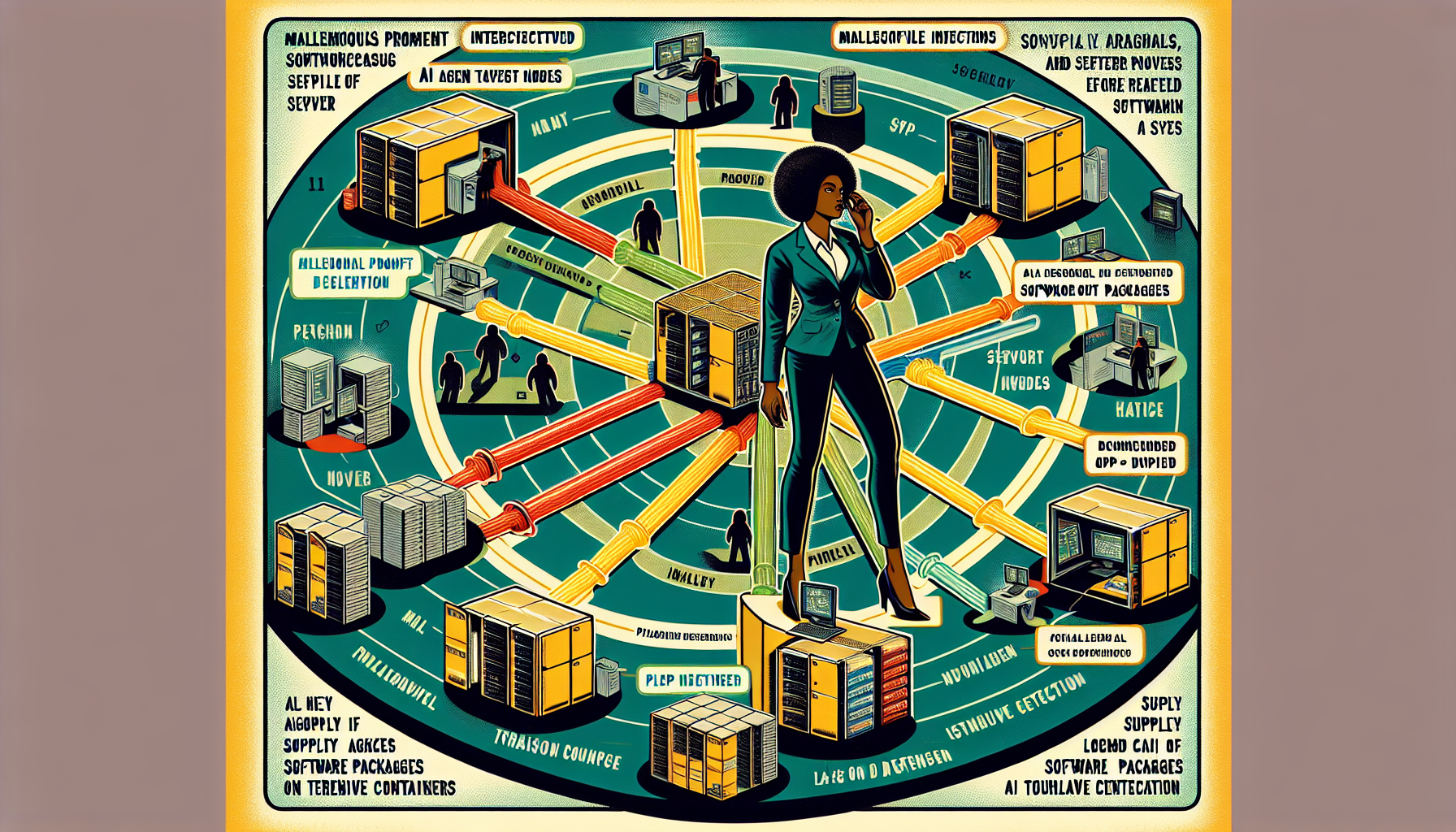

Security researchers have published a comprehensive hardening playbook for MCP-powered AI agent pipelines, covering prompt injection, tool poisoning, and supply chain risk for agentic workflows.

AI Agent Pipelines Are the New Attack Surface — Here Is How to Harden Them

The Model Context Protocol (MCP) has quietly become the backbone of the agentic AI ecosystem. Created as an open standard for connecting AI models to external tools, databases, and services, MCP has accumulated over 150 million downloads, 7,000-plus publicly accessible servers, and integration into hundreds of active developer projects. As AI agents become production workloads rather than research demos, the security architecture of the systems they run on has become a topic every developer building with MCP needs to understand.

Security researchers published a comprehensive analysis of MCP attack vectors in mid-April 2026, identifying three primary risk categories and — importantly — a practical hardening checklist that developers can act on. This piece covers what each category means and what a secured MCP deployment looks like in practice.

The Three Attack Vectors to Understand

1. Prompt Injection Through Tool Responses

Prompt injection in an agentic context is different from classic prompt injection against a chat interface. When an AI agent calls a tool and receives a response, that response becomes part of the model's context — and a malicious actor controlling a data source can inject instructions into that response that the model may execute.

The defense is explicit tool output validation: treat tool responses as untrusted input, validate their structure, and include system prompt instructions that explicitly tell the model not to follow instructions embedded in tool responses. This is the MCP security equivalent of parameterized queries for SQL injection — a discipline rather than a one-time fix.

2. Tool Poisoning in Multi-Agent Environments

As AI workflows become multi-agent — where one AI model orchestrates others, each with their own tool access — the question of trust between agents becomes critical. A compromised or malicious tool in a pipeline can return responses designed to manipulate downstream agent behavior.

The defensive architecture is capability minimization: each agent in a pipeline should have access only to the tools and data it needs for its specific role. An agent that reads a database should not have write access. An agent processing user inputs should not have access to internal APIs. The principle of least privilege that has governed secure system design for decades applies directly to agentic AI pipelines.

3. Supply Chain Risk in MCP Server Deployments

MCP servers are software packages that developers install and run. Like any software supply chain, MCP server packages can be tampered with, typosquatted, or compromised after initial deployment. Researchers identified over 200 open source MCP projects with varying security postures across the ecosystem.

Practical defenses:

- **Audit installed MCP servers** — review what packages are in your MCP server inventory and when they were last verified

- **Pin specific package versions** to avoid silent updates introducing new behavior

- **Run MCP servers in isolated environments** — containers or VMs with scoped permissions, minimal filesystem access, and restricted network connectivity

- **Monitor MCP server output** for unexpected patterns rather than treating tool responses as inherently trusted

Building a Hardened MCP Deployment

Translating the April 2026 research into a practical implementation checklist:

1. **Sanitize tool outputs before they re-enter model context** — validate structure, strip or escape unexpected content, and treat external data with the same skepticism you would apply to user input

2. **Scope system prompts defensively** — explicitly instruct the model not to act on instructions found in tool responses, external databases, or retrieved content

3. **Use environment isolation** — run MCP server processes with minimal system privileges and no unnecessary network connectivity beyond what the tool explicitly requires

4. **Implement logging and monitoring** — capture what tools are being called, what they return, and flag anomalies; agentic workflows calling unexpected tools or producing unusual output patterns are worth investigating

5. **Treat multi-agent trust boundaries explicitly** — when one AI agent calls another, that call should be authenticated and authorized, just like any service-to-service API call in a conventional microservices architecture

Resources for AI-Security-Minded Developers

The security research community has moved quickly to document and categorize MCP risks. Resources worth bookmarking:

- The VulnerableMCP project maintains a continuously updated database of known MCP security issues

- Palo Alto Unit 42 published a detailed analysis of MCP sampling attack vectors

- Red Hat's security team published a practical risk and controls guide for MCP deployments in enterprise environments

The Good News: The Community Is Ahead of the Problem

The positive development in all of this is timing. These agentic AI vulnerabilities were identified and documented before they were exploited at significant scale. The MCP security research community is building the defensive toolkit that developers need while the attack surface is still new and before adversarial actors have fully mapped it.

AI agent pipelines represent a genuinely new security category. The developers and security teams who engage with MCP security practices now will be in a fundamentally stronger position than those who treat agentic security as a future concern.

Sources: Infosecurity Magazine (April 15, 2026), IT Pro (April 2026), OX Security Blog (April 2026), Palo Alto Unit 42 (April 2026), Practical DevSecOps (April 2026), Red Hat Security Blog (April 2026)