Exabeam Now Monitors AI Agents Like It Monitors Human Users — With Behavioral Baselines

Exabeam's April 2026 New-Scale update introduces Agent Behavior Analytics, treating ChatGPT, Gemini, and Copilot integrations as first-class security identities requiring behavioral monitoring.

The Security Gap Nobody Is Talking About Enough

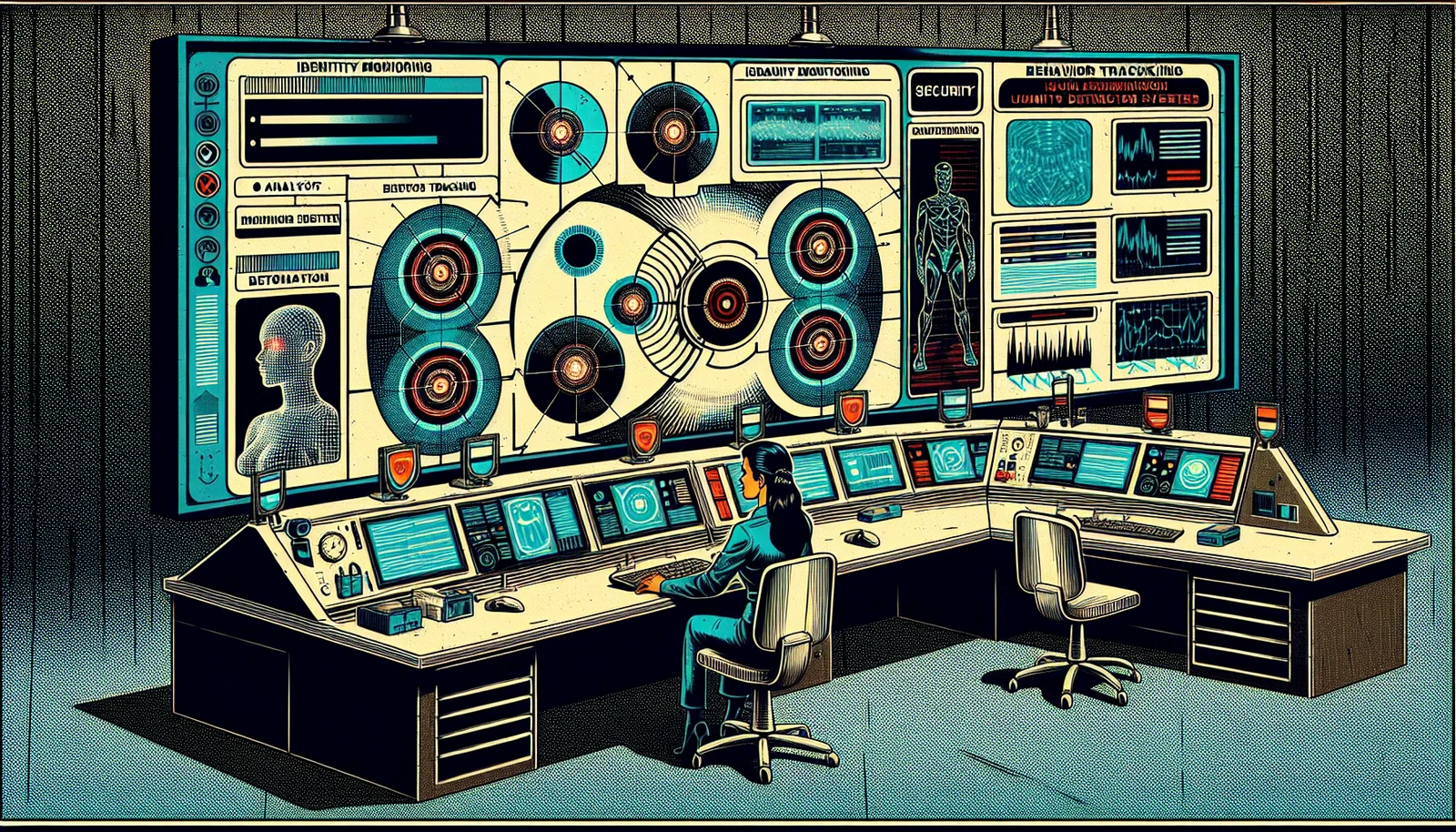

Your organization has deployed ChatGPT Enterprise, Microsoft Copilot, and possibly custom AI agents with access to internal systems, email, calendars, and code repositories. Your SIEM is monitoring your human users' behavioral patterns. But who is monitoring the AI agents?

That gap — AI agents operating with privileged access to enterprise systems but without behavioral baselines, anomaly detection, or identity-centric monitoring — is exactly what Exabeam is addressing with the April 2026 release of Agent Behavior Analytics (ABA) within its New-Scale Analytics platform.

What Agent Behavior Analytics Does

The core concept extends the behavioral analytics that security teams already use for human users to the AI agents operating alongside them. An employee who suddenly starts accessing systems they have never touched before triggers a behavioral alert. An AI agent doing the same thing should too.
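The "never touched before" signal described above can be illustrated with a small first-seen tracker. This is a minimal sketch, not Exabeam's implementation: the class name `FirstSeenTracker`, the `learning` flag, and the identity and system names are all hypothetical, chosen only to show that the same check applies to a human account and an agent identity.

```python
from collections import defaultdict


class FirstSeenTracker:
    """Remembers which systems each identity (human or agent) has accessed.

    Hypothetical illustration only; not an Exabeam API.
    """

    def __init__(self):
        # identity -> set of systems that identity has already accessed
        self._seen = defaultdict(set)

    def observe(self, identity, system, learning=False):
        """Record an access and return True if it should raise an alert.

        An alert is raised when the identity touches a system for the
        first time, unless we are still in the baseline learning period.
        """
        is_new = system not in self._seen[identity]
        self._seen[identity].add(system)
        return is_new and not learning
```

During a learning window, accesses populate the baseline without alerting. After that, the first access to an unfamiliar system returns `True`, whether the identity is an employee or an agent service account.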

Exabeam's ABA implementation dynamically baselines normal behavior for AI agents integrated with the organization's systems — specifically targeting ChatGPT, Google Gemini, and Microsoft Copilot deployments. The behavioral model tracks three key dimensions:

- Token usage patterns: what volumes and types of prompts are being processed, and whether that changes significantly over time.
- API call sequences: which internal systems the agent is accessing, in what order, and at what frequency.
- Outbound data communication: what data is leaving the organization through AI agent interactions.

When observed behavior deviates from the established baseline, the platform flags the anomaly for security review. The system treats AI agents as security identities in their own right — not as just another application, but as entities with behavioral patterns that can be monitored, baselined, and assessed for risk.
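To make "deviates from the established baseline" concrete, here is a minimal sketch that assumes a simple z-score test over per-agent history. The source does not describe the statistical model Exabeam uses, so the technique, the function names (`deviates`, `check_agent`), the metric keys, and the threshold of 3 standard deviations are all assumptions for illustration.

```python
import statistics


def deviates(history, observed, threshold=3.0, min_samples=5):
    """Return True if `observed` is far from the mean of `history`.

    Assumed technique: a plain z-score. With too little history there is
    no baseline yet, so nothing is flagged.
    """
    if len(history) < min_samples:
        return False
    mean = statistics.fmean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        # Perfectly flat baseline: any change at all is a deviation.
        return observed != mean
    return abs(observed - mean) / stdev > threshold


def check_agent(baseline, observation, threshold=3.0):
    """Return the names of the metrics whose observed value is anomalous.

    `baseline` maps a metric name to a list of past values;
    `observation` maps a metric name to the latest value.
    """
    return [
        metric
        for metric, value in observation.items()
        if deviates(baseline.get(metric, []), value, threshold)
    ]
```

Given hypothetical daily histories for `tokens`, `api_calls`, and `outbound_bytes`, a day with ordinary token and API volumes but a large spike in outbound bytes would return only `["outbound_bytes"]`, which is the kind of result an analyst would then review.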

The OWASP Agentic Top 10 Connection

Exabeam has aligned the ABA detection rule set to the OWASP Agentic Top 10 — a framework specifically addressing the unique risk categories posed by AI agents with autonomous access to systems and data. This alignment gives security teams a structured, industry-recognized vocabulary for discussing and documenting AI agent risk — one that maps to existing governance frameworks rather than requiring entirely new tooling.

The OWASP Agentic Top 10 addresses risks including prompt injection, insecure tool execution, excessive agency, and supply chain vulnerabilities specific to AI agent deployments. Detection rules mapped to this framework mean that alerts generated by ABA correspond directly to recognized risk categories that compliance teams can act on with established playbooks.
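One way to picture the mapping is a lookup from detection rule to risk category, so that every alert carries a label a compliance team recognizes. The sketch below is illustrative only: the rule IDs are invented, the category strings are the informal names used in this article rather than official OWASP identifiers, and nothing here reflects Exabeam's actual rule set.

```python
from dataclasses import dataclass

# Hypothetical rule IDs mapped to the risk categories named in this article.
RULE_TO_CATEGORY = {
    "agent-prompt-pattern-shift": "prompt injection",
    "agent-unexpected-tool-call": "insecure tool execution",
    "agent-first-seen-system": "excessive agency",
    "agent-new-plugin-source": "supply chain vulnerabilities",
}


@dataclass
class Alert:
    agent: str
    rule_id: str
    category: str


def tag_alert(agent, rule_id):
    """Attach a risk category to an alert; unknown rules are marked 'unmapped'."""
    category = RULE_TO_CATEGORY.get(rule_id, "unmapped")
    return Alert(agent=agent, rule_id=rule_id, category=category)
```

Keeping the mapping in data rather than in detection logic means the category labels can be updated when the framework is revised, without touching the rules themselves.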

Why This Is the Right Moment

The release matches where enterprise security stands in 2026. AI agents with access to internal systems are no longer a future concern; they are already deployed in many enterprise environments. The question has shifted from "should we monitor AI agents?" to "how do we do it effectively without rebuilding our entire security stack?"

Exabeam's approach extends existing SIEM and UEBA infrastructure rather than requiring a separate AI-specific monitoring platform. For security teams already running Exabeam, ABA is an additive capability rather than a greenfield deployment, which is the right architectural decision. The tooling security teams know and trust should expand to cover the new identities operating in their environment, not be replaced by something unfamiliar.

Sources: Exabeam Blog (April 1, 2026), OWASP Agentic Top 10 (2026), SiliconAngle (April 2026)